A Landlord’s Guide to Using Artificial Intelligence for Rental Processes

By Kimberly Rau, MassLandlords, Inc.

Among all the ways landlords use technology to make operating within the rental housing industry easier, artificial intelligence (AI) is a more sophisticated tool than rent collection software or communication by text message. AI can potentially be very useful to landlords, but there are plenty of things to be aware of before you consider automating a process that typically benefits from the human touch.

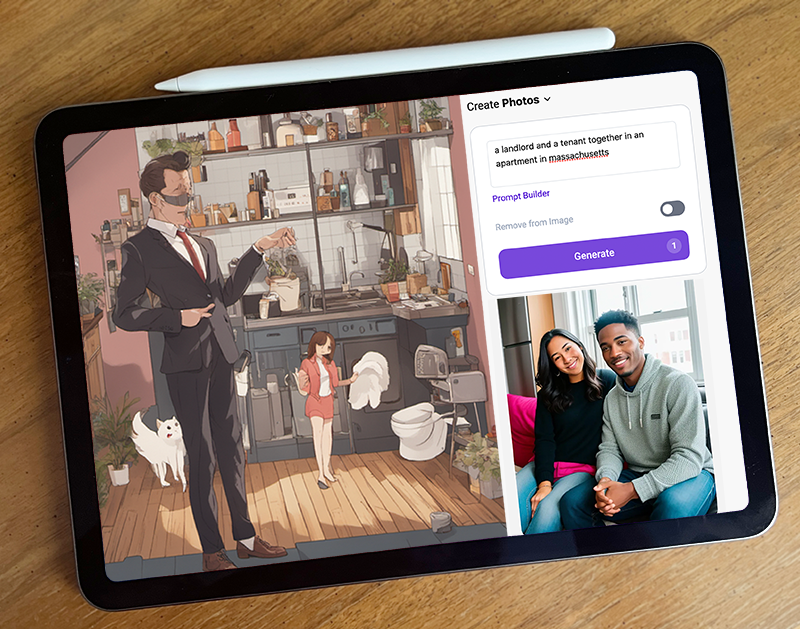

These are just two of the strange results we got when we asked various AI generators to give us an image of “a landlord and tenant in an apartment.” AI may be the wave of the future, but it has a long way to go before it can replace people. [Image License: Editorial Use AIPlayground and StarryAI, frame CC by SA J. Rau for MassLandlords Inc.]

What Is a Neural Network?

Artificial intelligence, neural networks and AI image generation have been hot topics of discussion recently, and it seems like more and more systems are being AI-automated every day. Virtual assistants such as Alexa and Siri are forms of artificial intelligence. So are customer service chat bots, content generators and facial recognition programs. Many of these programs learn and become “more intelligent” by using a neural network.

A neural network is a form of artificial intelligence that teaches computers to process information in a method that is similar to how the human brain operates. A human brain processes information by filtering it through many layers of nodes (called “neurons”) that are interconnected. When a neuron receives an “input” electrical signal, there’s a chance the neuron will do nothing, but there’s also a chance it will turn on and begin emitting its own signal. Each layer takes the information at hand and evaluates it in a way that informs what the next layer does, until finally, you are at an end state where the neurons have come together to produce an action or behavior (or, potentially, no action). This video offers a good explanation of how neural networks operate by having one identify hand-written numbers.

An artificial intelligence network represents neurons as numbers. When the network returns a wrong answer (one that does not match what you have requested), it can go back through its layers and tweak its own neuron numbers until it “learns” how to provide the correct information.
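To make the idea of "tweaking neuron numbers" concrete, here is a minimal sketch of a single artificial neuron learning a toy rule. It is an illustration only, not how any particular AI product is built: the function names, the learning rate and the choice of task (output 1 only when both inputs are 1) are all assumptions made for this example.

```python
import math


def sigmoid(x):
    """Squash any number into the range 0..1, like a neuron that is 'off' or 'on'."""
    return 1.0 / (1.0 + math.exp(-x))


def predict(weights, bias, inputs):
    """Compute the neuron's output signal for one set of inputs."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(total)


def train_neuron(examples, epochs=5000, learning_rate=0.5):
    """Repeatedly nudge the neuron's numbers so wrong answers become less wrong."""
    weights = [0.0, 0.0]  # the "neuron numbers" the network is allowed to change
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            output = predict(weights, bias, inputs)
            error = output - target  # how far off the answer was
            # Slope of the squared error with respect to the neuron's total input.
            gradient = error * output * (1.0 - output)
            weights = [w - learning_rate * gradient * x
                       for w, x in zip(weights, inputs)]
            bias -= learning_rate * gradient
    return weights, bias


# Hypothetical toy task: answer 1 only when both inputs are 1.
AND_EXAMPLES = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias = train_neuron(AND_EXAMPLES)

for inputs, target in AND_EXAMPLES:
    print(inputs, "expected", target, "got", round(predict(weights, bias, inputs)))
```

Real networks stack many thousands of these neurons in layers, but the principle is the same: compare the answer with what was wanted, then adjust the numbers a little and try again.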

You can use various AI platforms to generate different types of results. Some platforms are trained to create entirely new content (essays, forms, images). Others can evaluate or edit existing content that you feed them. It sounds futuristic, but although AI is here to stay, it has a long way to go before it can replace people entirely.

So, What’s Wrong with AI Generated Content?

Neural networks and AI-generated content have some good applications. For instance, AI technology can help improve internet accessibility by quickly creating alt-image descriptions for people with low or no vision who use screen readers to access content.

AI can also make it easier to generate text from spoken words. For people with hearing difficulties, AI technology can easily transcribe audio into text. Current speech-to-text generation still has trouble distinguishing homophones (your and you’re, for example) and filtering out non-speech sounds. AI technology can help eliminate those accessibility barriers.

There are ways that AI technology may be able to help you with your landlording practices, and we’ll cover those. But there are many ways in which AI-generated content falls short of excellence, or even of “good,” and you should be aware that it can be used against you.

Pro: AI Can Scan Your Lease and Other Forms for Errors

Starting with something good: Artificial intelligence could keep you out of court. If you are not sure whether your forms and notices for your rental practice comply with Massachusetts housing law, artificial intelligence could be a good resource.

Of course, our first recommendation is to always use MassLandlords forms, which are written by actual people without the aid of artificial intelligence. These forms will keep you compliant with our complicated housing laws. But if you have older rental agreements, or have opted to draft certain things on your own, without an attorney’s guidance (we don’t recommend you do this), a program like ChatGPT might be able to assist. In theory, ChatGPT could read through your rental agreement or other form and, with the right prompt, point out anything in it that runs afoul of the law. Will it always be accurate? We can’t guarantee that. ChatGPT, like most of the AI generation we’ve seen, is not infallible. But it could alert you to verbiage or clauses that merit your attention to ensure they’re lawful.

Pro: Chat Bots Could Help You with Tenant Inquiries

When you list an available rental unit on Zillow or similar sites, you are most likely inundated with inquiries. It’s a tight housing market, yes, but there are also scammers and bots that simply ping you with no interest in your specific property.

If you have just one rental unit, then you may be able to manage the inquiries you’ll receive on your own. If you only get a couple hundred messages, you can probably weed through the junk. But if you have multiple properties available at a time, you can see how that could quickly get out of hand. Chat bots have been used with some degree of success for large property management firms. You may be able to use a similar program to help you sift through messages, and direct people to answers for the most common property questions. (Just don’t become non-responsive and assume a chat bot can handle everything your prospective renters need.)
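As a rough illustration of what "directing people to answers for the most common property questions" can look like, here is a minimal keyword-based sketch. Commercial chat bots are far more sophisticated; the topics, keywords, placeholder answers and the `route_inquiry` function are all hypothetical. The key design choice is the fallback: anything the program does not recognize is handed to a person rather than ignored.

```python
import re

# Hypothetical canned answers; replace the placeholders with your own policies.
FAQ_ANSWERS = {
    "pets": "Placeholder: describe your pet policy here.",
    "parking": "Placeholder: describe parking arrangements here.",
    "utilities": "Placeholder: list which utilities are included here.",
}

# Hypothetical keywords that suggest each topic.
TOPIC_KEYWORDS = {
    "pets": {"pet", "pets", "dog", "dogs", "cat", "cats"},
    "parking": {"parking", "garage", "driveway"},
    "utilities": {"utilities", "heat", "electric", "electricity", "water"},
}


def route_inquiry(message):
    """Return ("auto_reply", answer) for a known topic, else ("needs_human", None)."""
    # Compare whole words so that "cat" does not match inside "location".
    words = set(re.findall(r"[a-z']+", message.lower()))
    for topic, keywords in TOPIC_KEYWORDS.items():
        if words & keywords:
            return ("auto_reply", FAQ_ANSWERS[topic])
    return ("needs_human", None)


print(route_inquiry("Do you allow dogs?"))
print(route_inquiry("What is the location like?"))
```

The first message is answered automatically with the pet-policy text; the second matches no keyword and is flagged for a human to answer.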

Artificial intelligence neural networks are designed to mimic how the human brain processes information; however, results may reflect bias or improperly contextualized information. (Image License: Kommers for Unsplash)

Con: AI Reflects Bias, Should Not Be Used for Tenant Screening

You’ve probably seen an example or two of some great AI-generated artwork. Just give the program a prompt (“an abstract painting of the ocean with lots of blue and green swirls”) and let the results amaze you. The more detailed you make your request, the closer your image will be (is supposed to be) to what you’re imagining.

But for all their acclaim, AI neural networks seem to miss the mark a lot. We asked one platform to generate “a landlord and tenant in an apartment” and got a single person sitting on a couch against a wall with a single random painting in the background. We got more specific, and requested “a landlord and tenant together in an apartment,” hoping that the word “together” would further imply the presence of two people, possibly signing a lease or taking a tour. Instead, we got two people snuggling on a sofa, beaming directly into the camera. We hope it goes without saying that this would not be appropriate landlord–tenant behavior. Often, if we got photorealistic results, the people had strange warping to their faces, extra fingers or hands, or other unsettling features.

Also, AI networks must have examples to learn from. Too often, those examples are from art that is not public domain or available for commercial use. As AI learns from stolen material, its results continue to contain elements of that unethically obtained content. Hollywood has even suggested using AI programs to continue to use artists’ likenesses in films well after their paid contracts are up, a talking point that made the news after SAG-AFTRA went on strike this summer. Six years ago, AI was used to digitally “resurrect” actor Peter Cushing for a Star Wars movie.

Finally, and most concerning, AI images reflect bias because they are learning from the images they already have, and those images may not accurately represent reality. AI networks have access to the images that are posted to the internet, and the internet is not known for being a well-balanced place. For instance, when we asked for an image of a “landlord,” we got a lot of men, unless we specifically requested a “female landlord.” While more landlords are male, it’s not by a terribly large margin (one statistic shows that in the United States, 55.7% of landlords are men, and 44.3% are women, hardly a staggering lead). And it gets worse. One MIT student uploaded a relatively casual picture of herself to an AI platform with the prompt to make the headshot look more professional. Instead of swapping her sweatshirt for a button-down or changing the background, the platform made her Asian features look much more Caucasian.

This problem is widely recognized, and there are ways to combat AI bias (being aware it exists at all is the first step). But there’s still a long way to go.

So, how does this relate to landlording? Maybe you aren’t running your prospective tenants’ identification through an AI image database, but if AI-generated images can reflect unintentional bias, AI tenant screening tools can, too.

There are many websites that advertise AI-automated tenant screening services. Maybe that sounds great to you. Properly screening tenants can be a time-consuming process, especially in Massachusetts, where anti-discrimination laws are strict. However, with AI, the neural network you’re using may not be able to place certain data in the proper context, which could lead to renters being rejected for bogus reasons.

For example, one prospective renter in California was rejected from a senior living community because an AI screening tool labeled him “high risk.” The red flag? A prior conviction, except the conviction was for littering, and didn’t even belong to him. The program had connected his name with the profile of someone with the same name, but younger, and living in an entirely different state. Imagine losing your shot at housing because a stranger thousands of miles away didn’t properly dispose of their garbage.
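A mix-up like this is easy to reproduce. The sketch below is purely illustrative and does not reflect how any actual screening product works: the `PersonRecord` type, both matching functions and the sample people are invented for this example. It shows how matching on name alone links an applicant to a stranger's record, while also requiring the birth year to agree does not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PersonRecord:
    """Hypothetical, simplified record; real screening data has many more fields."""
    full_name: str
    birth_year: int
    state: str


def name_only_match(applicant, record):
    """Naive rule: treat two records as the same person if the names match."""
    return applicant.full_name.strip().lower() == record.full_name.strip().lower()


def name_and_birth_year_match(applicant, record):
    """Stricter rule: the names AND the birth years must both agree."""
    return (name_only_match(applicant, record)
            and applicant.birth_year == record.birth_year)


# Invented sample data: same name, different age, different state.
applicant = PersonRecord("Alex Example", 1950, "CA")
court_record = PersonRecord("Alex Example", 1985, "NV")

print(name_only_match(applicant, court_record))            # True: false match
print(name_and_birth_year_match(applicant, court_record))  # False: different person
```

Even the stricter rule is only a sketch. Any automated match that could cost someone housing deserves a human review before a decision is made.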

We suggested earlier that a chat bot could help very busy landlords with initial property inquiries, but we would not recommend going more in-depth with AI for tenant screening at this time, especially not for anything relating to images. Think of how many otherwise great candidates you might be missing out on because an AI program filtered them out for incorrect, or incorrectly interpreted, data.

Conclusion

Some people rush to be early adopters of the latest technology. Others prefer to stand back and let the kinks get worked out before they take a dive into something new. We’ve seen how artificial intelligence can help evaluate information, but also know it can create problems when the network isn’t quite human enough to properly analyze the data before it.

The other issue is that AI is cheap to use. AI can “teach itself” with less hand-written code than other programs need, so the market is rushing to adopt it as a cost-saving measure. But “cheap” doesn’t always mean “good.”

We recommend you always use rental forms that have been written and reviewed by human beings. Use our helpline if you have questions about landlord–tenant issues. If you do use AI for some aspect of your rental business, make sure you carefully evaluate the information it gives you. If you’re unsure about something you’re doing as a landlord, contact your lawyer, not a chat bot.