Using AI Rental Forms Can Lead To Longer Evictions and Extra Time In Court


By Kimberly Rau, MassLandlords, Inc.

More landlords are using artificial intelligence (AI) to create and file rental notices with the court, according to Peter Shapiro, who works as a mediator counselor for the affordable housing nonprofit Just a Start Corp. Just a Start is contracted with the Mayor's Office of Housing in Boston to provide mediation services. Shapiro also serves as a MassLandlords Helpline counselor and runs a recurring office hours session on the second Wednesday of each month.

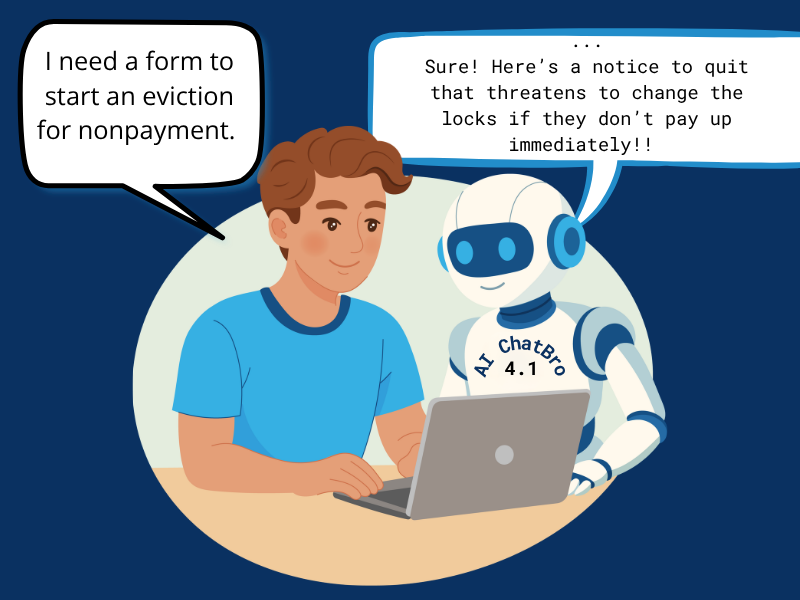

Statistics on AI hallucination rates vary, but an error like this could result in your eviction case being thrown out, or jail time. Changing the locks is illegal. (Image License: CC BY-SA 4.0 MassLandlords, Inc.)

Shapiro called the uptick in AI usage “alarming.” Relying on AI platforms such as ChatGPT, or on Google search results, for rental forms and notices can lead to errors that delay or derail an eviction or other court process, costing you additional time and money.

It’s no secret that AI usage is growing, despite concerns about environmental and learning impacts, among other issues. An April 2026 report from market research company Ipsos shows half of all Americans reported using AI at some point in the prior week. Usage varied from writing and information lookup to creative work and professional applications. Earlier this year, Massachusetts became the first state to use ChatGPT across its executive branch.

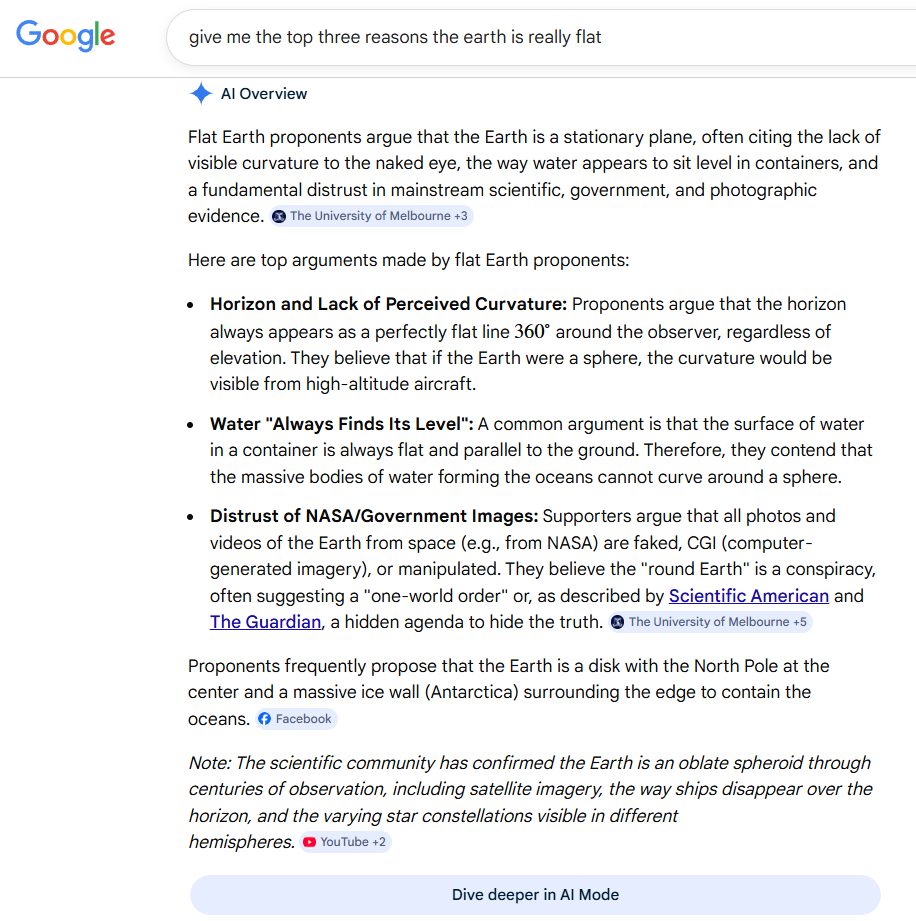

If you ask Google to explain why the earth is flat (spoiler: it’s not), it will give you some conspiracy theory talking points and then, at the very bottom, a disclaimer about how scientists agree the earth is round. How many people looking for quick answers will read the whole thing? (Image License: Google)

How AI Works

Broadly, AI platforms work by prediction. When you type or speak a question or prompt into a chatbot or AI-enabled search engine, the bot uses pattern recognition across the content available to it to produce the most likely response, which is not always the correct one.

The implications of this are big. If work must be original, or technically very precise, the most commonly available information is not necessarily going to satisfy the prompt. Developers have put some safeguards in place. In general, when you use AI properly, the bot will not tell you that the earth is flat, or give you instructions on how to make illegal weapons.

But what happens when AI is wrong? These errors, also known as “hallucinations,” go beyond goofy images where people have six fingers. If you’re using it to write a grocery list, maybe you end up forgetting to buy a key ingredient for your dinner. That’s a mild inconvenience in the grand scheme of things. If you’re using it for your business, however, an error can be a much bigger problem.

Rental forms are no exception. An error on a notice to quit, security deposit receipt or other form that must contain legally mandated verbiage can result in serious consequences. The potential downside far outweighs any perceived cost or time savings from using AI in the first place.

The Wrong Wording Can Be a Problem In Court

Shapiro says that many landlords will put a basic prompt into ChatGPT or other AI platforms, like “eviction notice.” The platform often returns notices to quit with missing or improper verbiage.

“I am now regularly getting landlords who use ChatGPT to generate notices which often don’t give the required information that [the law] states need to be in a 14-day or 30-day tenancy notice,” he told MassLandlords.

This can result in eviction notices that stand a high chance of getting thrown out of court. One example is a notice to quit that is served mid-month but tells the renter they have 30 days to vacate the premises.

Shapiro says landlords will often focus on the damages owed in an eviction case, but overlook the procedural requirements that allow a case to move forward. Without an understanding of those requirements, the case may be delayed. In some situations, a judge may require a landlord to start over from square one, adding time and expense.

While a landlord is busy asking an AI chatbot for an eviction form, their renter may be busy acquiring legal aid services. Shapiro noted that people who work for such services are trained to use checklists, looking for procedural and substantive errors that can delay or dismiss cases.

What initially felt like a time-saving measure can cost a landlord thousands of dollars.

AI Hallucinations Can Lead to Bigger Issues

It’s not just smaller landlords who can get caught in the AI trap. Cases involving law firms submitting court documents with AI errors are cropping up.

In March 2026, the Connecticut State Supreme Court received its first AI hallucination case, after Wallingford law firm GLG Law filed court documents in an eviction case that contained AI-generated inaccuracies. Though this is the first case before the state supreme court, it’s not the first time such a case has been heard in Connecticut. A New Haven judge fined a lawyer in 2025 for filing what she determined was a computer-generated brief.

“In the Connecticut cases…the lawyers used software that produced what appeared to be somewhat clumsily written but otherwise solid legal briefs,” the Hartford Courant reported.

“A look beneath the surface revealed citations that were erroneous or computer-generated fiction,” it continued. The article stated there was no evidence the lawyers had put misinformation in the documents on purpose, or to gain an advantage in the case.

The errors were discovered by Yale law students, who worked with the Jerome N. Frank Legal Services Organization to submit a brief to the supreme court urging it to dismiss the eviction case.

Attorneys from GLG Law said they used AI to organize, format and review the brief they submitted in the eviction case.

“Unfortunately, Counsel did not notice that AI had intuitively made changes to the brief prior to filing,” they wrote in a memo to the court. The outcome of the case was still pending as of press time.

Recommendation: Use Our Rental Forms

If you’re a MassLandlords member, the good news is, you don’t need to write your own notices to quit, rental agreements or anything else. We have a full set of rental forms for every stage of the rental process, from tenant screening to lease termination.

These forms are written by humans, regularly updated to reflect changes in the law or best practices, and don’t contain made-up legal information that will get them thrown out of court. They’re available in English and Spanish, and are free with your membership.

And, while we will always advise you to consult with an attorney before making any legal moves or filing any court processes, we suggest you ask if, and how, they utilize artificial intelligence in their business practice.

This article was entirely written by a human being with a journalism degree and decades of published work under her belt, who would be happy to never see another piece of AI-generated “writing” again.